
Dedicated Internet Access for AI and LLM Companies

AI companies run on data. Getting that data where it needs to go, fast, reliably, and without interruption, is what keeps models training, APIs responding, and products shipping on schedule.

Dedicated internet access (DIA) is a private internet connection used exclusively by your organization. Unlike standard business broadband, where bandwidth is shared across many customers, DIA gives you guaranteed bandwidth, consistent speeds, and a contractual uptime commitment from your provider. For AI and LLM companies moving large datasets, serving real-time inference APIs, and syncing data across cloud environments, that reliability is not a nice-to-have. It is a core infrastructure requirement.

Most internet connections are built for general business use. Email, video calls, cloud-based apps: workloads that are light, bursty, and forgiving of occasional slowdowns. AI workloads are none of those things. They are sustained, high-volume, and sensitive to both speed and consistency. DIA is the dedicated internet service built to handle that.


What Kind of Internet Does an AI or LLM Business Need?

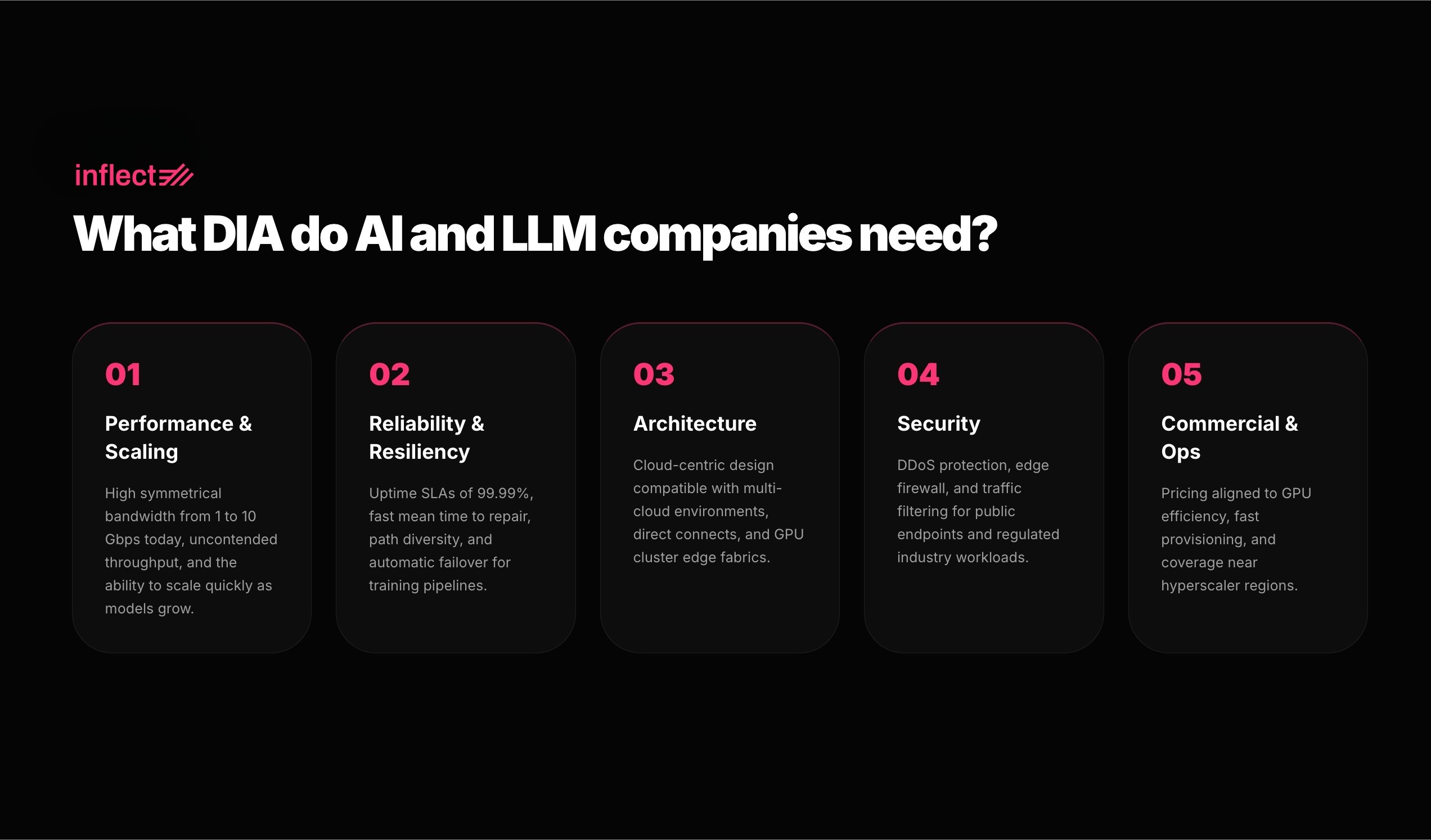

The right DIA for AI and LLM businesses is high-capacity, reliable, and built for data-intensive applications. The table below breaks down what AI and LLM teams consistently look for when researching and choosing a dedicated internet provider.

| Dimension | What AI and LLM Buyers Expect |
| --- | --- |
| Performance and Scaling | High symmetrical bandwidth from 1 to 10 Gbps today, uncontended throughput, and the ability to scale quickly as models and datasets grow. |
| Reliability and Resiliency | Uptime SLAs of 99.9 to 99.99 percent, fast mean time to repair, path diversity, and automatic failover to keep APIs and training pipelines online. |
| Architecture | Cloud-centric design compatible with multi-cloud environments, direct cloud connects, SD-WAN, and the edge connecting GPU clusters and data fabrics. |
| Security | DDoS protection, edge firewall, and traffic filtering for public AI API endpoints, plus governance-friendly connectivity for regulated industry workloads. |
| Commercial and Operations | Pricing aligned to GPU utilization efficiency, fast provisioning, flexible contract terms, and coverage near the hyperscaler regions where AI infrastructure runs. |

DIA is the stable edge that feeds and drains high-performance AI compute fabrics without becoming the bottleneck.

Why AI and LLM Companies Need Dedicated Internet Access

It helps to understand where internet connectivity actually fits in an AI platform. The heavy lifting of model training (GPU-to-GPU communication, moving data between processors) happens internally within the data center over ultra-fast private networks. That traffic never touches the public internet.

But a significant amount of traffic does go in and out over the internet, and it matters just as much to day-to-day operations. Raw training datasets arrive from external sources. Customer-facing APIs (the connections that let your users actually interact with your AI product) send and receive data continuously. Monitoring tools stream performance data to cloud platforms. Model files sync across environments.

When the internet connection handling all of that is a shared line with no performance guarantees, the cracks show quickly. Datasets take longer to arrive. API responses slow down under load. Monitoring gaps appear. None of this requires a full outage to cause real damage. Consistent, minor degradation on a shared connection is enough to slow down operations and frustrate users.

A dedicated internet connection removes that uncertainty. The bandwidth is yours alone, the speeds are consistent, and the provider is contractually accountable for performance.

How Poor Internet Connectivity Affects AI-Driven Operations

The impact of under-provisioned internet on AI operations is easy to underestimate until you see it play out. According to IDC research cited by Lumen Technologies, 47 percent of North American enterprises reported in 2024 that generative AI had a significantly larger impact on their connectivity strategy, nearly double the 25 percent who said the same in mid-2023. (Source: Lumen Technologies, 2025.)

Here is what that pressure looks like in practice. Training jobs get queued waiting for datasets that are still downloading over a slow connection. Customer-facing AI products respond inconsistently because shared internet traffic spikes during peak hours. Engineering teams lose visibility into platform health because monitoring data is delayed or dropping. Each of these is a productivity and reliability problem, not just a network problem.

The cost is also more visible than most teams expect. GPU compute is expensive. Time spent waiting for data to arrive is time that compute is sitting idle. A dedicated internet connection with guaranteed bandwidth and clear service level agreements keeps data moving and teams focused on building, not troubleshooting.
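As a rough illustration of that idle-compute cost, the sketch below multiplies cluster size, hourly GPU price, and hours spent waiting on data. The function name and every figure in the example (64 GPUs, 2.50 USD per GPU-hour, a 6-hour wait) are hypothetical placeholders, not numbers from any provider or from this article; substitute your own.

```python
def idle_gpu_cost_usd(num_gpus: int, hourly_rate_usd: float, wait_hours: float) -> float:
    """Cost of GPUs sitting idle while a dataset is still in transit.

    All inputs are assumptions supplied by the caller; this is simple
    multiplication, not a billing model.
    """
    if num_gpus < 0 or hourly_rate_usd < 0 or wait_hours < 0:
        raise ValueError("inputs must be non-negative")
    return num_gpus * hourly_rate_usd * wait_hours


if __name__ == "__main__":
    # Hypothetical cluster: 64 GPUs at 2.50 USD per GPU-hour, idle for 6 hours.
    cost = idle_gpu_cost_usd(num_gpus=64, hourly_rate_usd=2.50, wait_hours=6)
    print(f"Idle cost while waiting for data: ${cost:,.2f}")
```

Even with modest placeholder numbers, a single delayed ingest can cost more than the monthly price difference between connection tiers, which is the comparison worth running with your own figures.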

The bandwidth is yours alone. The speeds are consistent. And your provider is contractually accountable for delivering both.

Common Dedicated Internet Access Use Cases for AI and LLM Companies

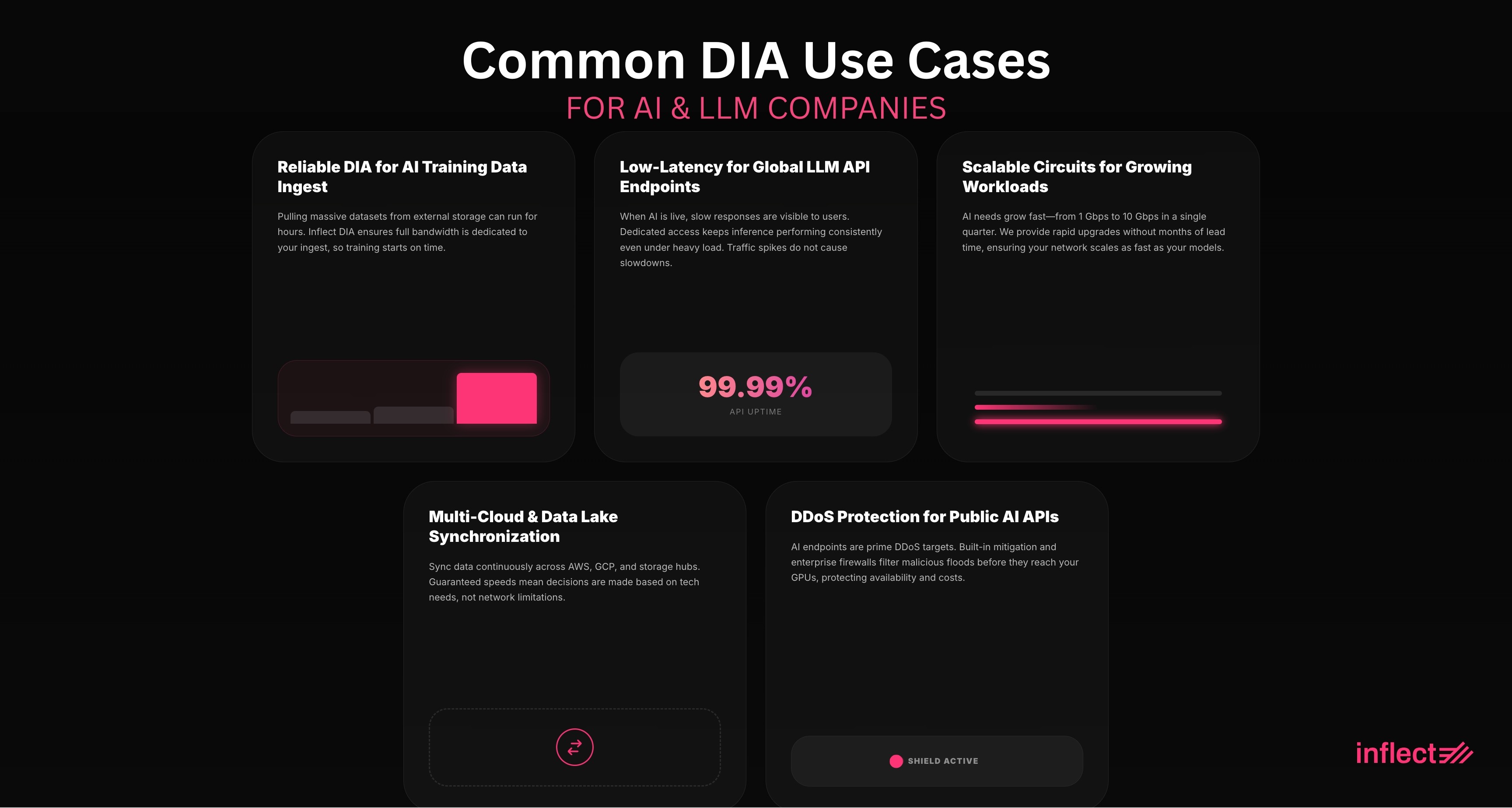

The most common DIA use cases for AI and LLM companies are training data ingest from external sources, global LLM API endpoints, scaling for growing AI workloads, multi-cloud AI architectures and data lake synchronization, and public AI and LLM API traffic.

Reliable DIA for AI Training Data Ingest from External Sources

Before a model can train, the data it needs has to arrive. For most AI teams, that means pulling large files and datasets from external sources including cloud object storage, partner data feeds, and third-party vendors, over the internet. That is a sustained, high-volume download that can run for hours at a time.

On a shared internet connection, that download competes with everything else on the line. On a dedicated internet connection, the full bandwidth is available for the duration. For AI teams running multiple training jobs or working to tight schedules, the difference between a 1 Gbps dedicated line and a congested shared connection can mean the difference between a training job that starts on time and one that waits.
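To make the comparison concrete, here is a minimal sketch that estimates how long a dataset takes to arrive at a given line rate. The `efficiency` parameter is an assumption standing in for protocol overhead and contention; the 0.9 and 0.3 values and the 10 TB dataset are illustrative guesses, not measurements.

```python
def transfer_hours(dataset_tb: float, link_gbps: float, efficiency: float = 0.9) -> float:
    """Estimated hours to move `dataset_tb` (decimal terabytes) over a link.

    `efficiency` is the assumed fraction of the nominal line rate that is
    actually usable for the transfer (overhead, contention, and so on).
    """
    if dataset_tb < 0 or link_gbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("invalid input")
    total_bits = dataset_tb * 1e12 * 8            # TB -> bits
    usable_bits_per_sec = link_gbps * 1e9 * efficiency
    return total_bits / usable_bits_per_sec / 3600


if __name__ == "__main__":
    dataset_tb = 10  # hypothetical dataset size
    scenarios = [
        ("1 Gbps dedicated", 1, 0.9),
        ("10 Gbps dedicated", 10, 0.9),
        ("1 Gbps shared, congested", 1, 0.3),  # assumed usable share
    ]
    for label, gbps, eff in scenarios:
        print(f"{label}: {transfer_hours(dataset_tb, gbps, eff):.1f} hours")
```

Under these assumptions the same 10 TB pull takes roughly a day on a dedicated 1 Gbps line and about three days on the congested one, which is the gap between a job that starts on schedule and one that waits.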

Low-Latency DIA with 99.99% Uptime for Global LLM API Endpoints

When your AI product is live and customers are using it, the internet connection serving those requests is part of the product experience. Slow responses, dropped connections, or downtime are not infrastructure problems that stay invisible. Users feel them immediately.

A dedicated internet connection keeps your inference API (the interface that processes customer requests to your AI) performing consistently even under heavy load. Because the bandwidth is guaranteed and not shared with other customers, traffic spikes do not cause slowdowns. McKinsey projects inference workloads will grow at 35 percent annually through 2030, eventually surpassing training as the dominant AI workload. (Source: McKinsey, 2024.) The connectivity infrastructure supporting customer-facing AI today is a long-term investment, not a temporary fix.

Scalable DIA Circuits with Rapid Bandwidth Upgrades for Growing AI Workloads

AI infrastructure rarely stays the same size for long. A team running experiments at 1 Gbps can find themselves needing 5 or 10 Gbps within a single quarter once a model moves from testing to production. Bandwidth requirements that double or triple in a year are common, not exceptional.

That is why scalability is a non-negotiable criterion when evaluating dedicated internet providers. The ability to upgrade bandwidth quickly, without months of lead time or a full contract renegotiation, is what separates providers built for modern AI workloads from those that are not. Zayo, one of North America's largest fiber network providers, reported fiber demand surging 12 to 18 times for AI deployments and forecasts U.S. data center capacity growing 2 to 6 times by 2030. (Source: Zayo, 2025) Customizable bandwidth options with fast provisioning are now a standard expectation, not a premium feature.
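If demand really does double or triple in a year, a quick projection shows how soon a circuit runs out of headroom. The sketch below assumes smooth compound growth, which is a simplification (real demand tends to jump when a model ships), and the function name and example figures are hypothetical.

```python
import math


def months_until_saturated(current_gbps: float, capacity_gbps: float,
                           annual_growth_factor: float) -> float:
    """Months until peak demand reaches circuit capacity.

    Assumes demand compounds smoothly at `annual_growth_factor` per year
    (2.0 = doubling, 3.0 = tripling). Returns 0 if already saturated and
    infinity if demand is not growing.
    """
    if current_gbps <= 0 or capacity_gbps <= 0:
        raise ValueError("bandwidth values must be positive")
    if current_gbps >= capacity_gbps:
        return 0.0
    if annual_growth_factor <= 1:
        return math.inf
    years = math.log(capacity_gbps / current_gbps) / math.log(annual_growth_factor)
    return 12 * years


if __name__ == "__main__":
    # Hypothetical: 2.5 Gbps peak today on a 10 Gbps circuit.
    for factor in (2.0, 3.0):
        months = months_until_saturated(2.5, 10, factor)
        print(f"growth x{factor:.0f}/year: saturated in about {months:.0f} months")
```

Comparing that runway against a provider's quoted upgrade lead time is one way to judge whether their provisioning process is fast enough for your growth curve.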

DIA for Multi-Cloud AI Architectures and Data Lake Synchronization

Most production AI systems do not live in a single cloud environment. Training might run on one provider, inference on another, and data storage somewhere else entirely. Keeping all of those environments in sync requires moving data across them continuously, and the reliability of that data movement depends on the quality of the internet connection doing the work.

A dedicated internet line gives each of those data flows guaranteed bandwidth and consistent speeds, so architecture decisions get made based on what is technically best rather than what the network can reliably handle that day. Teams connecting offices and development centers to cloud-based apps and AI tools also benefit. Employees get the same fast, low-latency experience they expect from the products they use every day.

DIA with DDoS Protection and Enterprise Firewall for Public AI and LLM API Traffic

Any AI product with a public-facing API is a potential target for DDoS attacks: coordinated floods of traffic designed to overwhelm your connection and take your service offline. The scale of this threat is not hypothetical: Cloudflare blocked 21.3 million DDoS attacks in 2024, a 53 percent increase over the previous year, with API infrastructure increasingly in the crosshairs. (Source: Cloudflare, 2025.)

A dedicated internet service with built-in DDoS protection and enterprise firewall capabilities filters malicious traffic before it reaches your infrastructure. For AI companies serving regulated industries, including finance, healthcare, and government, providers that also carry SOC 2 and ISO 27001 compliance documentation simplify the procurement and vendor review process with enterprise customers.

What to Look for in a Dedicated Internet Provider for AI and LLM Infrastructure

Not all dedicated internet providers are built for the demands of AI infrastructure. Here are the criteria that matter most when evaluating your options.

Guaranteed bandwidth from 1 Gbps to 10 Gbps with room to grow

AI workloads need high, sustained throughput for data ingest, API traffic, and cloud synchronization. Start by confirming the provider can meet your current needs, then verify they can upgrade you quickly as those needs increase, up to 10 Gbps and beyond.

Symmetrical upload and download speeds

Many business internet connections, especially cable and other broadband services, are asymmetric by default, faster on the download side than upload. DIA circuits are typically sold as symmetrical, but AI workloads frequently push significant data outbound too, so confirm the provider offers symmetrical speeds at the tiers you need.

Service level agreements that cover more than just uptime

An uptime SLA tells you the connection will be on. Latency, jitter, and packet loss SLAs tell you it will actually perform. For customer-facing AI products where response time directly affects user experience, performance SLAs are non-negotiable.
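Two small calculations help when reading an SLA. The first converts an uptime percentage into the downtime it still permits; the second summarizes a set of round-trip probe results into loss, average latency, and jitter. This is a sketch only: the probe values are made up, `None` marks a lost probe, and jitter here is the mean absolute difference between consecutive successful probes, a simplification that may differ from how a given provider defines it in their contract.

```python
from typing import Optional, Sequence


def allowed_downtime_minutes(uptime_percent: float, period_days: float = 365.0) -> float:
    """Minutes of downtime an uptime SLA still permits over the period."""
    if not 0 <= uptime_percent <= 100:
        raise ValueError("uptime_percent must be between 0 and 100")
    return (100 - uptime_percent) / 100 * period_days * 24 * 60


def summarize_probes(rtts_ms: Sequence[Optional[float]]) -> dict:
    """Summarize round-trip probes; None means the probe was lost."""
    if not rtts_ms:
        raise ValueError("need at least one probe")
    received = [r for r in rtts_ms if r is not None]
    loss_percent = 100 * (len(rtts_ms) - len(received)) / len(rtts_ms)
    if not received:
        return {"loss_percent": loss_percent, "mean_ms": None, "jitter_ms": None}
    mean_ms = sum(received) / len(received)
    # Simplified jitter: average change between consecutive successful probes.
    diffs = [abs(b - a) for a, b in zip(received, received[1:])]
    jitter_ms = sum(diffs) / len(diffs) if diffs else 0.0
    return {"loss_percent": loss_percent, "mean_ms": mean_ms, "jitter_ms": jitter_ms}


if __name__ == "__main__":
    for pct in (99.9, 99.99):
        print(f"{pct}% uptime allows {allowed_downtime_minutes(pct):.1f} min/year of downtime")
    print(summarize_probes([10.0, 12.0, None, 11.0, 15.0]))  # made-up samples
```

The gap between 99.9 and 99.99 percent is roughly nine hours versus under one hour per year, and neither figure says anything about how the line behaves while it is nominally up, which is why the performance metrics matter.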

On-net coverage near your cloud providers

A dedicated fiber internet circuit physically located close to AWS, GCP, or Azure reduces the distance your data has to travel, which means lower network latency for every API call and data transfer. Ask providers specifically about on-net presence near the cloud regions you use.

DDoS protection and firewall capabilities

Public-facing AI APIs are attractive targets for attacks that can take your service offline and generate unexpected bandwidth costs simultaneously. Look for DDoS mitigation and enterprise firewall options built into the service rather than bolted on separately.

Fast provisioning and customizable bandwidth

AI projects move fast. A provider that needs months to upgrade your dedicated internet connection, or requires a full contract renegotiation to adjust capacity, will slow you down. Prioritize providers with flexible, fast processes for scaling up.

Frequently Asked Questions: Dedicated Internet Access for AI Companies

What bandwidth does a dedicated internet connection need for AI training data ingest?

It depends on the scale of your workloads, but 1 Gbps is a reasonable starting point for small-to-medium AI teams, with 5 to 10 Gbps being typical for larger pipelines moving significant volumes of training data daily. The key factor is that your internet speed needs to keep pace with your training schedule. If large files are still downloading when a job is supposed to start, the connection is undersized.
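One way to check whether a connection is undersized is to work backward from the schedule: how much sustained bandwidth is needed to land a given volume of data inside the window before a job starts? The sketch below does that and picks the smallest tier that fits. The tier list, the 0.9 efficiency factor, and the example workload are assumptions for illustration, not a provider's actual offering.

```python
from typing import Optional, Sequence


def required_gbps(dataset_tb: float, window_hours: float, efficiency: float = 0.9) -> float:
    """Nominal line rate needed to move `dataset_tb` within `window_hours`.

    `efficiency` is the assumed usable fraction of the nominal rate.
    """
    if dataset_tb < 0 or window_hours <= 0 or not 0 < efficiency <= 1:
        raise ValueError("invalid input")
    bits = dataset_tb * 1e12 * 8
    return bits / (window_hours * 3600) / 1e9 / efficiency


def smallest_sufficient_tier(needed_gbps: float, tiers_gbps: Sequence[float]) -> Optional[float]:
    """Smallest tier that meets the need, or None if no tier is large enough."""
    candidates = [t for t in sorted(tiers_gbps) if t >= needed_gbps]
    return candidates[0] if candidates else None


if __name__ == "__main__":
    # Hypothetical: 5 TB must arrive within an 8-hour overnight window.
    need = required_gbps(dataset_tb=5, window_hours=8)
    tier = smallest_sufficient_tier(need, tiers_gbps=[1, 5, 10])  # assumed tiers
    print(f"Need about {need:.2f} Gbps; smallest sufficient tier: {tier} Gbps")
```

In this example a 1 Gbps circuit falls short even though the daily volume sounds modest, because the constraint is the delivery window rather than the total size.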

How does dedicated internet access improve LLM inference API performance?

On a shared internet connection, your traffic competes with everyone else on the same line, which means your API can slow down precisely when demand is highest. A dedicated internet connection gives you private, guaranteed bandwidth, so high-traffic periods do not affect response times. The result is consistent API performance that you can back with service level agreements and communicate clearly to customers.

Can a dedicated internet circuit scale as AI workloads grow from PoC to production?

Yes, if you choose the right provider. The key questions to ask are: how long does a bandwidth upgrade take, and does adjusting capacity require renegotiating the contract? Many organizations find their internet speed requirements double or triple within a year as AI workloads move from experimentation to production. A provider with customizable bandwidth tiers and fast provisioning processes handles that growth without disruption.

What security features should a DIA provider offer for public-facing LLM API endpoints?

At minimum: DDoS attack mitigation, enterprise firewall options, and traffic filtering. Beyond that, look for integration with zero-trust security frameworks, the ability to assign static IP addresses for secure endpoint identification, and compliance documentation such as SOC 2 and ISO 27001 if your customers are in regulated industries and will ask for it during procurement.

Source Dedicated Internet Access for Your AI Infrastructure

Guaranteed bandwidth from 1 to 10 Gbps and above. 99.99% uptime SLAs. DDoS protection and enterprise firewall. On-net coverage near the cloud regions where your AI infrastructure runs.

About the Author

Chanyu Kuo

Director of Marketing at Inflect

Chanyu is a creative and data-driven marketing leader with over 10 years of experience, especially in the tech and cloud industry, helping businesses establish strong digital presence, drive growth, and stand out from the competition. Chanyu holds an MS in Marketing from the University of Strathclyde and specializes in effective content marketing, lead generation, and strategic digital growth in the digital infrastructure space.
